Review of AI Vision NYC by Hengchang (Alan) Liu (hl5843@nyu.edu)

Photographs by Clone Wen (cw4295@nyu.edu)

Building on the momentum of its Sydney gathering last November, Canva returned to the stage on March 11, 2026, to host AI Vision NYC. This industry event at Spring Studios brought together speakers from organizations including Canva, OpenAI, Anthropic, Runway, Pinecone, Later, and the University of Sydney. Framed around the future of creativity, work, and technological change, the event was less concerned with presenting AI as a spectacular novelty than with asking how AI can be made workable, sustainable, and socially meaningful in everyday practice. The central issue was no longer whether AI could produce impressive outputs, but how AI was being integrated into workflows, institutions, and forms of cultural production.

Through discussions of usability, trust, creative production, and leadership, the speakers pointed toward a post-hype moment in which the larger issue is how AI reorganizes the conditions under which people work, create, communicate, and make decisions.

Usable AI and Workflow Adoption

One of the clearest concerns at AI Vision NYC, following from the event’s central theme of practical integration, was whether AI can become a tool people repeatedly return to in ordinary professional settings.

This question emerged most explicitly in the panel “AI That People Actually Use.” Tim Moore (CEO, Vū Technologies) approached this issue in terms of concrete value, emphasizing return on investment and time saved. In his account, the success of AI depends less on technical spectacle than on whether it reduces friction in the work process. Jenny Wen (Head of Design, Anthropic) made a related point from a design perspective, arguing that effective AI must “live beyond the demo.” Her formulation was particularly revealing because it identified a broader condition of the current AI moment: as generative systems become easier to access, the existence of a compelling demonstration no longer guarantees meaningful adoption. The central issue is continuity rather than novelty: whether a tool can be integrated into repeated patterns of use.

This emphasis on use also revealed that usability is not a neutral category. To describe AI as “usable” already implies assumptions about users, labor, and interface design: what kinds of effort should be minimized, which forms of complexity should remain visible, and which decisions should be delegated to the system. Several speakers returned to the idea that successful AI should make “simple things simple” while making “hard things possible.” Such formulations appear straightforward, yet they point to an important tension. The simplification of interaction often depends on embedding particular defaults into the interface, quietly structuring how users engage with the system while limiting the visibility of its underlying operations.

For this reason, AI adoption is inseparable from interface politics. A system becomes sustainable not merely because it functions well, but because it organizes user behavior into manageable, repeatable forms. The future of AI, as framed here, rests less on the production of ever more astonishing outputs than on the quieter achievement of becoming embedded infrastructure.

Efficiency vs. Expertise

Bringing an academic perspective to the debate, the keynote by Sandra Peter (Director, Sydney Executive Plus, The University of Sydney) pushed the discussion toward a more fundamental tension: whether the efficiencies promised by AI come at the cost of expertise. Against the event’s generally optimistic rhetoric, she offered a more cautious argument: AI may reduce friction in work, but it may also erode the forms of attention, judgment, and accumulated knowledge that make work meaningful and reliable in the first place.

Peter framed this issue through the problem of delegation. As AI systems become more capable, users are increasingly tempted to offload not only repetitive labor but also parts of interpretation, synthesis, and decision-making. This creates a contradiction at the center of AI adoption. On the one hand, organizations seek efficiency by using AI to compress time, automate minor tasks, and accelerate ideation. On the other hand, overextending this logic risks weakening the very human capacities that remain necessary for evaluating outputs, contextualizing information, and recognizing when a system is wrong. The danger, then, is not simply that AI replaces expertise, but that it gradually reshapes the conditions under which expertise is exercised and valued.

If AI tools are increasingly designed to feel intuitive and frictionless, then ease of use can quickly be mistaken for reliability. A system that appears efficient may still conceal shallow reasoning, generic outputs, or misplaced confidence. In that sense, the problem of expertise is also an epistemic one: when AI reduces visible effort, it becomes harder to determine whether a result is genuinely well-judged or merely quickly produced. Efficiency, in this context, is not a neutral gain but a shift in the relation between labor and evaluation.

The question is no longer whether AI should be used, but how to pursue efficiency without hollowing out the expertise needed to interpret, supervise, and contest machine-generated outcomes. The more successfully AI integrates into ordinary practice, the more urgent it becomes to ask what kinds of human knowledge may be diminished in the process.

Invisible AI and the Politics of Trust

Visibility emerged as a key term throughout the event, repeatedly generating tension and concern. This was especially clear in the first panel. Tim Moore suggested that ideal AI systems should reduce friction and merge naturally into existing patterns of use, while Jenny Wen similarly implied that AI may, over time, resemble earlier machine-learning systems that became infrastructural precisely because users no longer experienced them as overtly “AI.” In both cases, the aspiration was not to maintain AI as a constant spectacle but to make it ordinary. Once a system becomes routine, it no longer needs to justify itself through surprise.

At the same time, invisibility is not an uncomplicated good. Nefaur Khandker (Head of Product Design, Clay) offered the most important counterpoint by emphasizing that trust depends not only on smoothness, but on the user’s ability to understand what the system is doing. His intervention shifted the discussion away from seamlessness alone and toward a more difficult design problem: how much of the system should remain visible if users are to feel genuine confidence rather than simply submit to convenience?

This contradiction matters because invisibility is never merely a technical achievement; it is also a political arrangement. The more a system withdraws from view, the more difficult it becomes to inspect its defaults, assumptions, and internal forms of mediation. What feels effortless at the interface level may depend precisely on making complexity inaccessible to the user. In this sense, invisible AI is also a form of governance: it organizes trust not by requiring full understanding, but by making the conditions of action feel natural enough that understanding appears unnecessary. A cleaner interface can therefore produce confidence while simultaneously weakening the user’s ability to evaluate the grounds of that confidence.

Creativity as Platform Workflow

Creativity is increasingly shaped by platforms, interfaces, prediction systems, and iterative workflows. The issue is how creative work is reorganized once generation, editing, optimization, and distribution are folded into a continuous, platform-based process.

Lyle Stevens (Co-Founder and Chief Strategy Officer, Later) framed this shift through the expansion of the creator economy. His talk was explicitly optimistic, presenting AI as a force that lowers barriers to participation and makes content production easier, faster, and more scalable. In his account, AI not only improves output quality but also enables creators to perform better across familiar metrics such as engagement, views, click-throughs, and commerce. At the same time, this democratization does not remove competition. Even with AI assistance, virality remains rare, and creative success is increasingly tied to systems of measurement, trend analysis, and recommendation. Creativity, in this framework, becomes inseparable from platform intelligence: what matters is not only making content, but making content that can be anticipated, ranked, circulated, and optimized within data-driven environments.

A more ambitious vision emerged in Stef Corazza’s (Head of AI Research, Canva) presentation, which placed workflow transformation at the center of creative production. Rather than treating AI outputs as finished assets, Corazza argued for bringing AI directly into the design process as a collaborator that assists users step by step. This was a significant intervention because it pushed against one of the most common frustrations of generative media: the production of “flat,” fixed outputs that are difficult to revise or integrate into broader projects. In this account, creative labor is reorganized as a workflow of prompting, revising, coordinating, and reusing outputs across multiple formats and stages.

Alejandro Matamala-Ortiz (Co-Founder and Chief Design Officer, Runway) extended this discussion by framing AI as a way of reopening the question of who gets to create in the first place. His talk centered on Runway’s founding proposition—“What if anyone in the world could become a filmmaker?”—and argued that the decisive question is not the tool itself, but the creative question posed to it. This formulation was especially important because it shifted the conversation away from technical capability alone and toward a broader redistribution of authorship. At the same time, however, this rhetoric of expanded participation remained embedded within a platform logic. If AI enables more people to create images, videos, and stories, it does so through systems designed, maintained, and structured by companies that define the available workflows. The democratization of creativity is therefore real, but it is not neutral: it is mediated through platforms that shape not only what can be made, but how making itself is imagined.

Speed, Scale, and the Normalization of AI

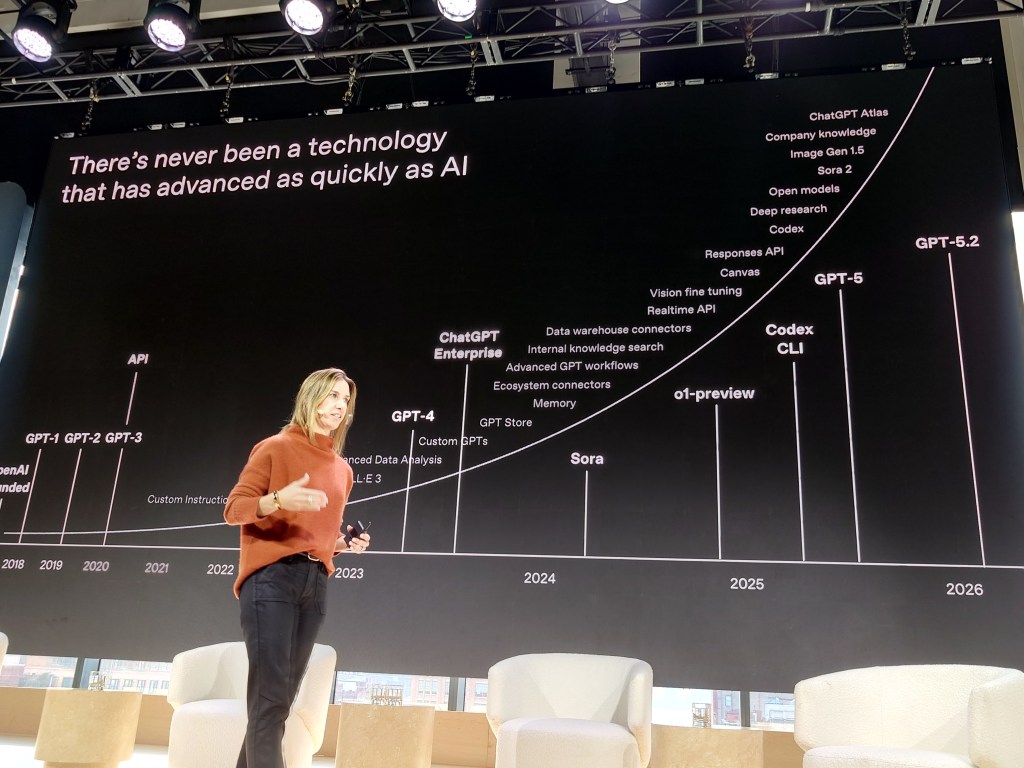

Ashley Kramer (Vice President of Enterprise, OpenAI) emphasized the unusual speed at which AI has entered mass use, noting that while the internet took 7 years and Facebook took 4 years to reach 100 million users, ChatGPT reached that scale in only 2 months.

However, Ash Ashutosh (CEO, Pinecone) complicated this emphasis on speed by questioning the language of productivity that often surrounds AI discourse. Rather than treating AI as a way to turn people into more efficient machines, he suggested that the more useful goal is to shorten the “time to insight” by bringing the right knowledge to the right person at the right moment. Benedict Evans (Independent Analyst) added a longer historical perspective, recalling earlier forms of automation that once seemed novel or disruptive but later became ordinary and nearly invisible. In discussing elevators, for example, he pointed to how processes that once required visible human mediation eventually became so normalized that younger generations no longer recognized them as a distinct technological transformation at all. In this sense, the event’s later discussions repeatedly returned to the question of how AI becomes embedded not only through scale and speed, but also through familiarity: as systems become routine, their mediation becomes less visible even as their influence expands.